The AI-Native Leader, Part 2: Stop the Playbook. Start the Harness.

Part 2 of "The AI-Native Leader." In Part 1, I argued that the AI era needs system thinkers. Now let's talk about what that system actually looks like.

I keep seeing the same thread pop up in every leadership forum I’m in: can anyone share an AI adoption playbook that worked?

Some of it is pressure from the top — executives want an AI efficiency story yesterday. Some of it is the quieter kind: the anxiety of watching everyone else move and not knowing if you’re behind. The reality is that nobody knows what success actually looks like yet. So they reach for a playbook.

Wrong instinct. Nothing here is stable enough to write a playbook for. Models change monthly. What worked in Q1 doesn’t hold in Q2. Every “best practices” doc is a historical artifact by the time it ships.

You don’t need a playbook. You need a harness.

What an agent harness actually is

If you’ve ever shipped an AI agent (a system that calls tools and takes actions, not just answers questions), you’ve probably heard of the discipline growing up around it: harness engineering, the layer beyond prompt and context engineering. The raw model is powerful but unreliable. What makes it useful in production isn’t the model itself. It’s the layer wrapping it: prompts that set context, tools that extend capability, validators that catch bad output, feedback loops that sharpen it over time, and guardrails for when something goes wrong.

That wrapping is the harness. It’s a living system — you tighten it when something breaks, loosen it when you’ve seen it hold, re-tune it when the underlying model changes. The model gets better on its own. The harness is what you build.

The craft you already have

Here’s what clicked for me:

A great manager has always been a harness. You just didn’t call it that.

Think about what a good manager actually does. They set context so the team understands the goal. They connect people to the tools and information they need. They catch problems before they ship. They give feedback that helps the team get sharper. They expand autonomy as trust builds — first supervised, then trusted, then autonomous. When something breaks, they tighten the harness and rebuild trust.

Prompts. Tools. Validation. Feedback loops. Evolving boundaries.

The craft of harnessing isn’t new. The thing you’re wrapping is.

The Four Modes of AI-Native Execution

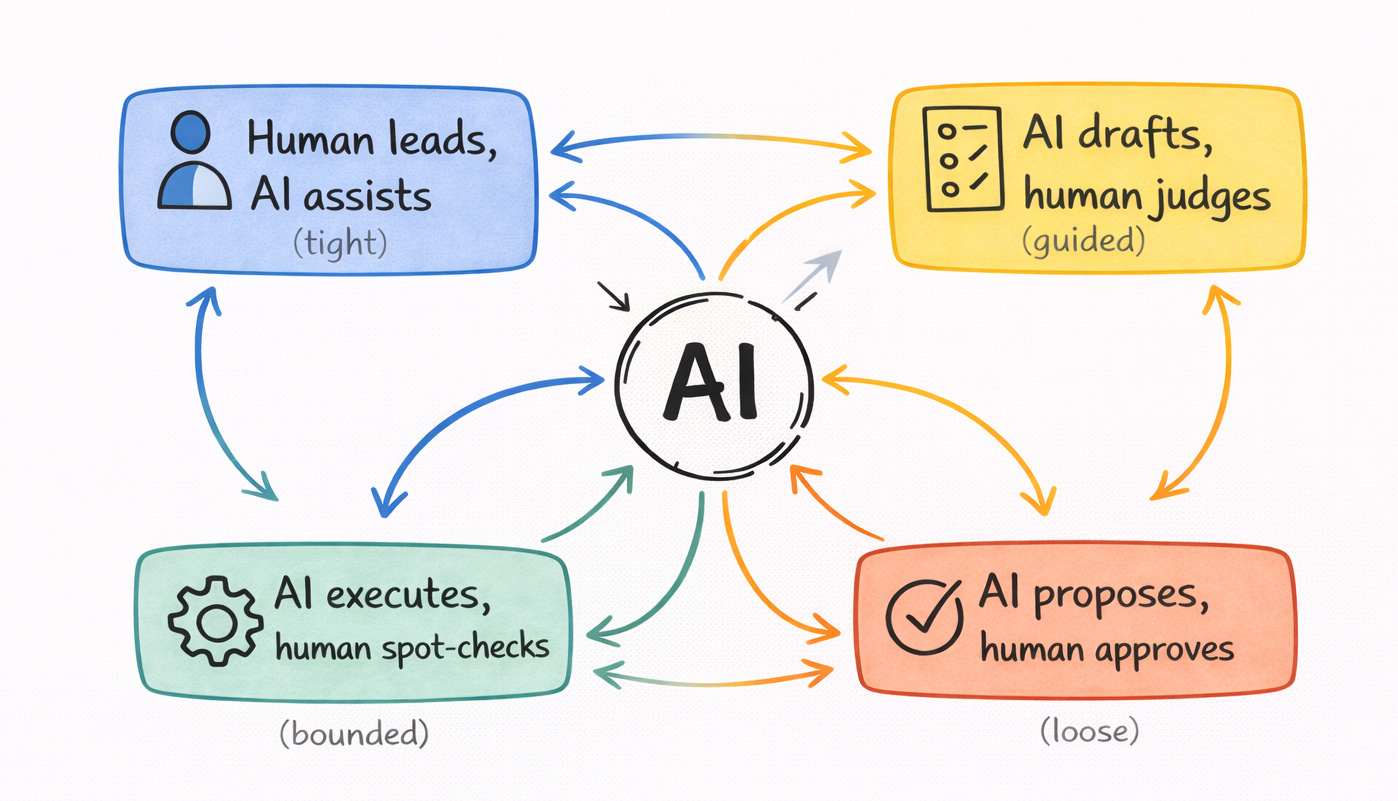

This is how we harness our team and AI agents at my startup, and it’s the pattern I keep recognizing in the teams that say it’s working. For any piece of work, the harness can be set in four ways: four modes of how tight the boundary is between human and AI. Most teams are already operating in some version of these. They just haven’t looked at them as a system yet.

Human leads, AI assists. The work is novel. The judgment is ongoing. A person has to drive: deciding what questions matter, what good looks like, what’s signal versus noise. AI is a powerful partner during this exploration. It pulls data fast, surfaces patterns, generates options. But a human is in front. Examples: pivoting (choosing what to build next); running a feasibility test with a prototype; org and role design.

AI drafts, human judges. The pattern is clearer, but quality still needs human eyes. AI does the heavy lifting of a first pass: a draft, an analysis, an implementation. A human evaluates: is this actually good? Does it capture what matters? What’s missing? Examples: a performance review draft the manager edits heavily; a comms plan for a product launch; a market analysis.

AI executes, human spot-checks. The pattern is proven. The quality bar is clear. AI runs the work; a human keeps an eye out for drift. Examples: code for well-understood, patterned work; operational review preparation.

AI proposes, human approves. This bucket is narrower than it sounds. It’s not for open-ended judgment; that’s mode one. It’s for work where AI has already done the job, but the consequences of shipping it wrong mean a human needs to say “go.” Examples: sending a mass customer email; large refunds or vendor payouts above a threshold; regulatory-adjacent submissions where sign-off is required.

Notice what these four aren’t: a classification system. A workflow isn’t “in” one of these modes forever. The mode is the current harness setting for that work right now.

Migration is the real work

Here’s the part most playbooks miss completely.

Take AI-assisted code. Eighteen months ago, most engineering teams had it squarely in AI drafts, human judges — AI generated the code, a human read every line carefully because you couldn’t trust it. Today, for a lot of teams, routine code has migrated toward AI executes, human spot-checks — the model is reliable enough, the review is lighter, and you’re mostly watching for drift. For novel architectural work, it might still be human leads, AI assists. Same technology. Same company. Different modes for different kinds of code — and the boundaries have moved in just eighteen months.

A harness isn’t a one-time design. It evolves — and not always deliberately. A team in AI drafts, human judges can drift into AI executes, human spot-checks without anyone deciding: review started feeling slow, nobody flagged the shift, and the mode on the whiteboard stopped matching the mode on the ground. Teams often think they’re operating in one mode when they’ve quietly moved to another.

This migration — the deliberate kind and the drifting kind — is where system leadership actually happens.

Loosening the harness — moving work toward more AI autonomy — should happen when:

You’ve seen the pattern work across enough reps

Your team has developed judgment about what “good” looks like here

You have a way to catch it when something drifts

Tightening the harness — moving work back toward more human involvement — should happen when:

The output quality dropped and nobody flagged it

A model or context change broke something that was stable

The stakes of the work changed

The real question isn’t whether your team is checking in on AI. It’s whether you can see the judgment your team is quietly adding. Pick one workflow. Ask two or three of the people running it what they override, correct, or rewrite before AI’s output ships. That’s your real harness, not the one on the whiteboard. The gap between the two is where things start to break. I’ll come back to that in Part 3.

Two harnesses, one craft

Here’s where most leaders stop — at harness #1, the one around AI.

But an AI-native org isn’t just an org that uses AI. It’s one where the shape of work has changed. AI handles a growing share of execution. People operate at higher judgment — strategy, vision, frontier exploration, the work that used to belong to the layer above them. That doesn’t happen on its own. The team needs a harness for it too.

This is harness #2: the one you build to pull people upward into the work you used to do. Same craft — context, tools, feedback, evolving boundaries — aimed at growth instead of execution. Share the reasoning behind decisions so people can make calls at that level. Expose them to the strategic conversations and customer signal that sharpen judgment. Give feedback on how they’re thinking, not just what they decided. Gradually expand the decisions they own.

So the true AI-native leader should be running both migrations at once. AI migrating toward more autonomy. People migrating toward more judgment, freed up by what AI is absorbing below them. Both the same craft. Both evolving.

I’ll say out loud what I’ve been watching. At the CEO and founder level, the recent decisions speak for themselves: aggressive cuts, leaner teams, all-in bets on AI productivity. The pressure is real. Boards want an efficiency story, and competitors are signaling hard. Harness #1 is getting all the urgency and budget. Harness #2 has quietly slipped off the roadmap. Hire less. Ship more. Prove the AI number is going up. Some of this is strategy. Some of it is pressure wearing strategy’s clothes. Either way, the work of developing the people who actually run the system isn’t happening. I hope a correction is coming once leaders feel the cost.

This is where the real cost hides. Move only harness #1 and the org gets more productive — the team does the same work faster. But the judgment work that would actually move the needle is still sitting a layer above them, untouched. Talent is underused. You get a more efficient team, not a more capable one. A productive org, not an exceptional one. And that’s how you lose. The leaders pulling ahead are running both migrations — turning AI leverage into people leverage, not just into speed.

In Part 3, I’ll dig into why that cost stays hidden — the failure modes I keep seeing when the harness drifts or quietly breaks. You can build the right system and still not know it’s failing, because the metrics most organizations are watching won’t catch it.

Next: Part 3 — how the harness breaks, and what to measure instead.

About Amy Wu

I’m an executive and life coach who works with leaders navigating inflection points, including the one AI is creating right now. If you’re facing something like what I’m describing and want a thought partner for it, I’d love to hear what you’re seeing.